Research

My research focuses on human behaviour, typically in interactions with novel computer systems.

Personalisation Design Cards

This study involved generating a novel set of ideation cards and having participants use them to co-design their own personalisation systems. The cards were used in workshops, and the resulting qualitative data were analysed using thematic analysis.

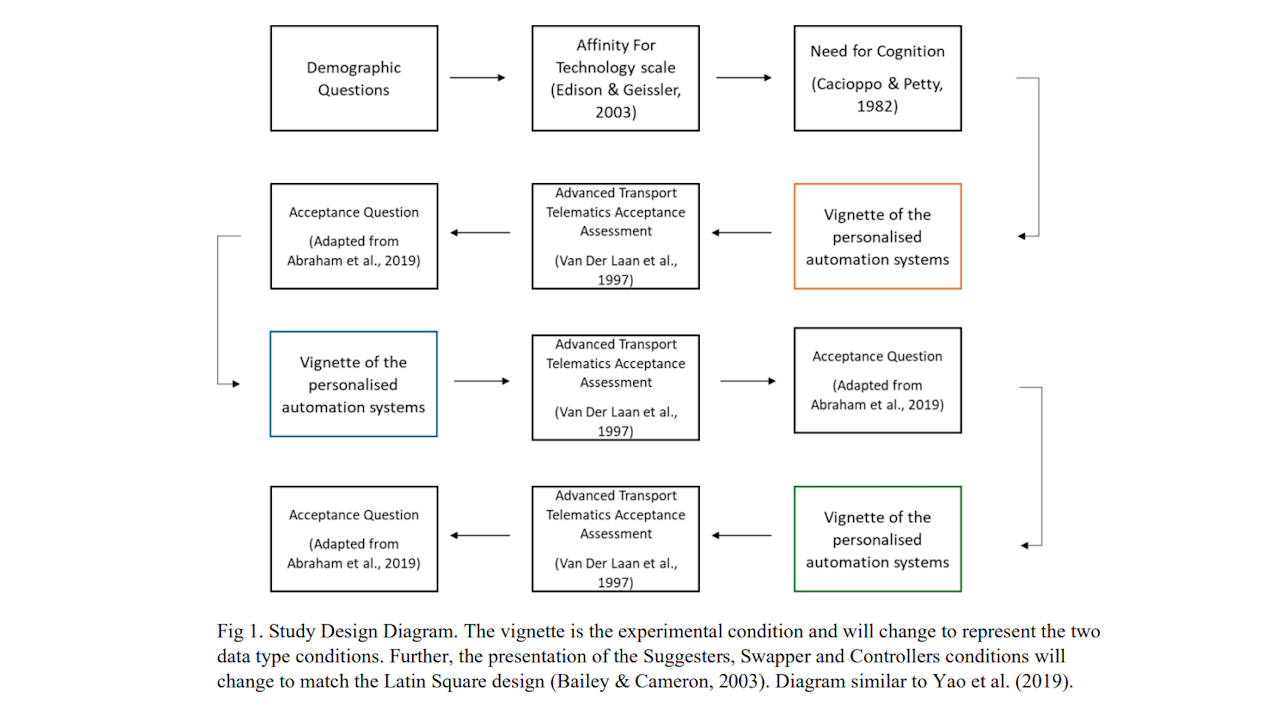

Stakeholder Questionnaire

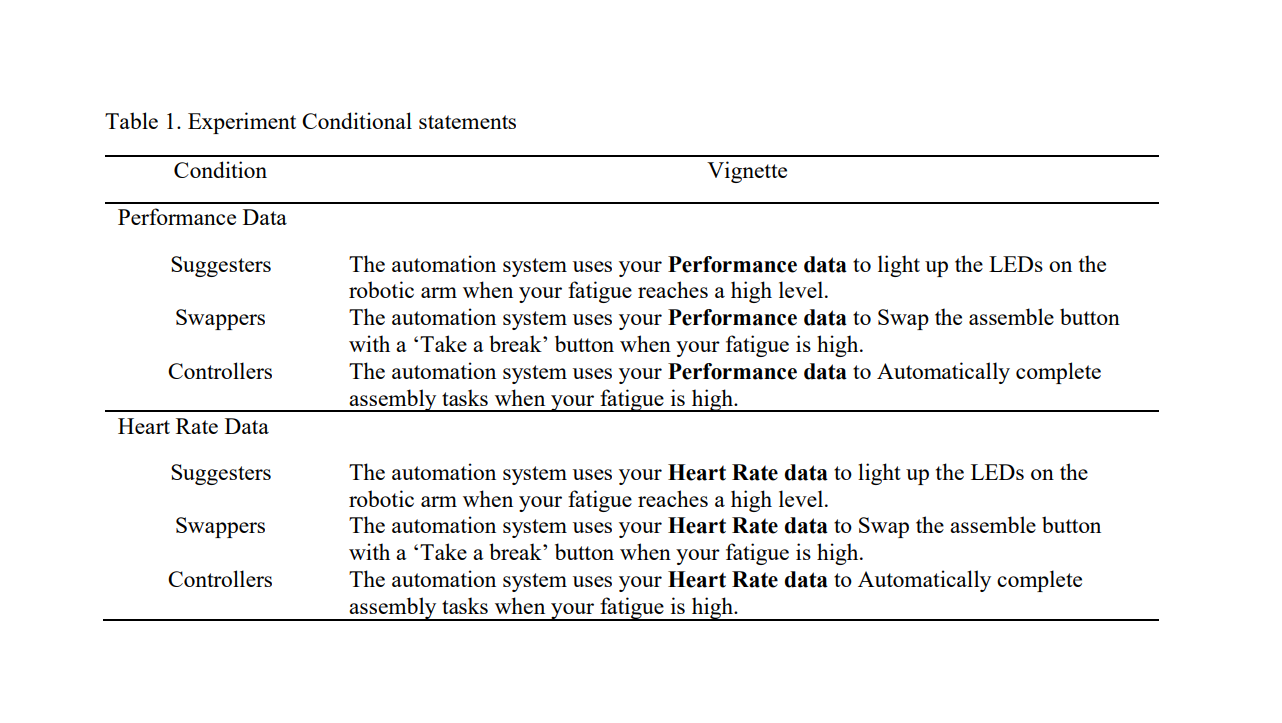

This study used an experimental questionnaire to understand how manufacturing stakeholders felt about personalisation. The questionnaire had multiple conditions and used a mixed-subjects design to test different examples of robotic personalisation systems.

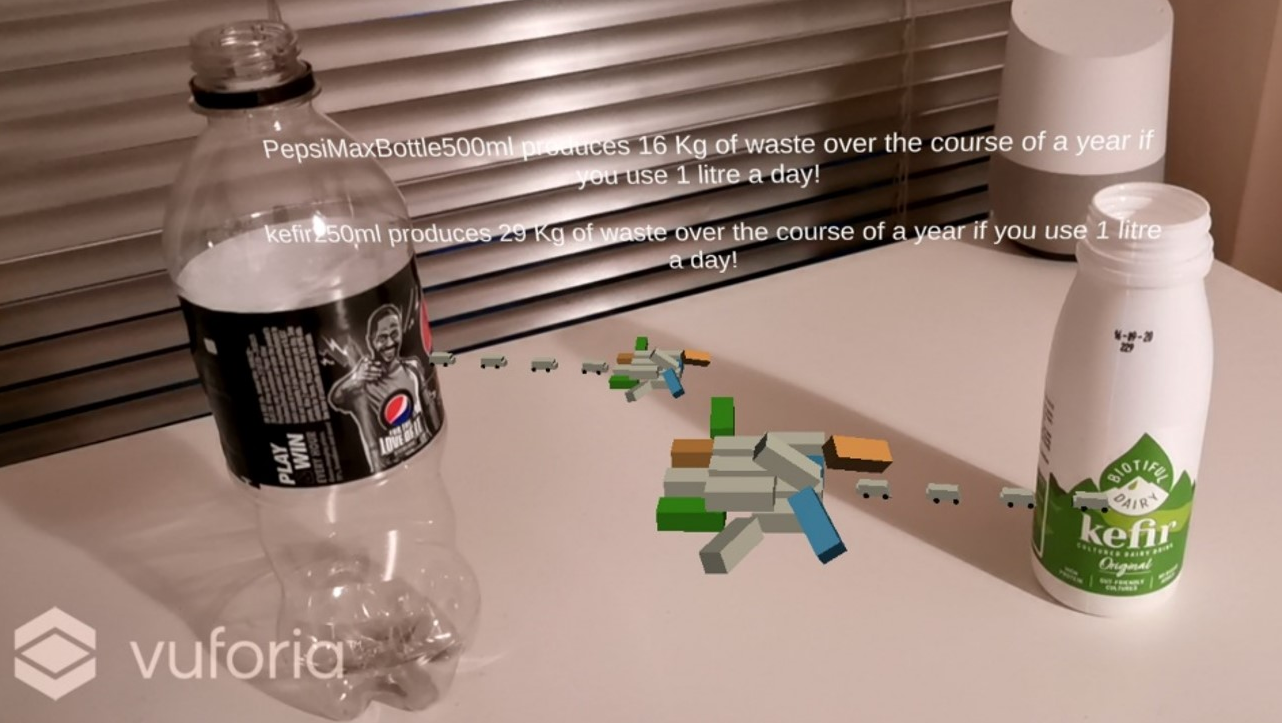

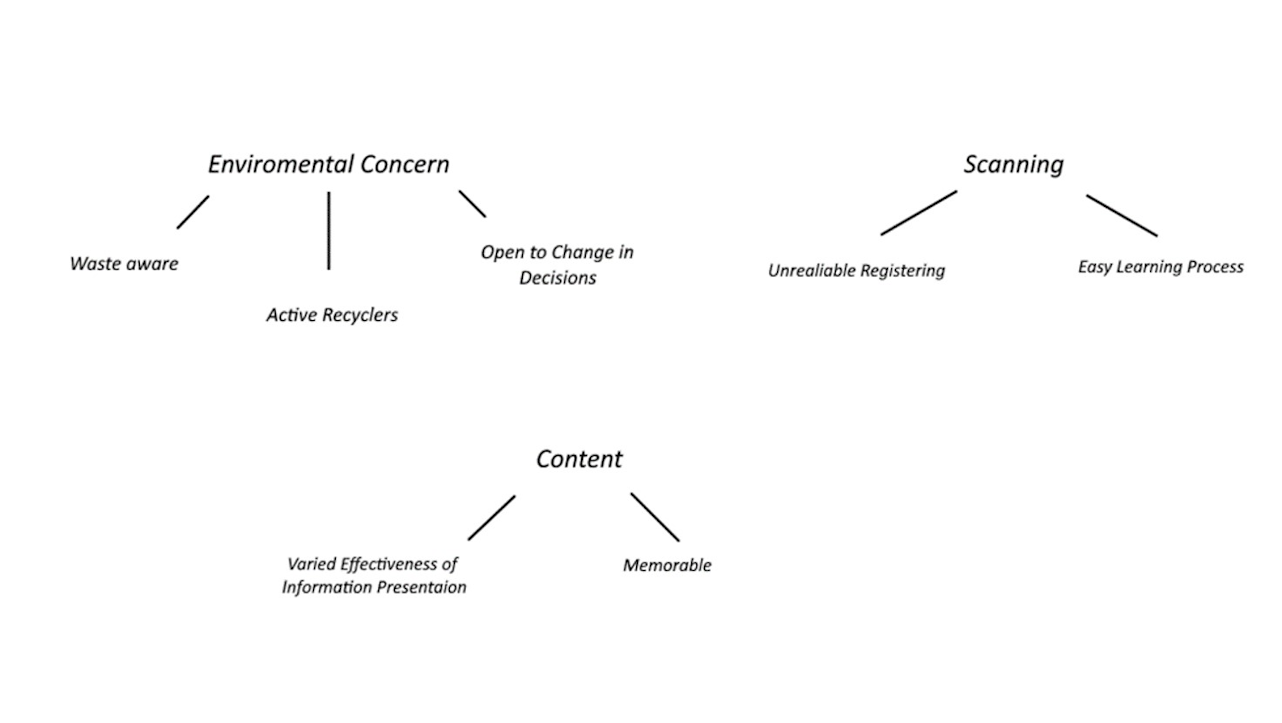

Augmented Reality Smartphone App

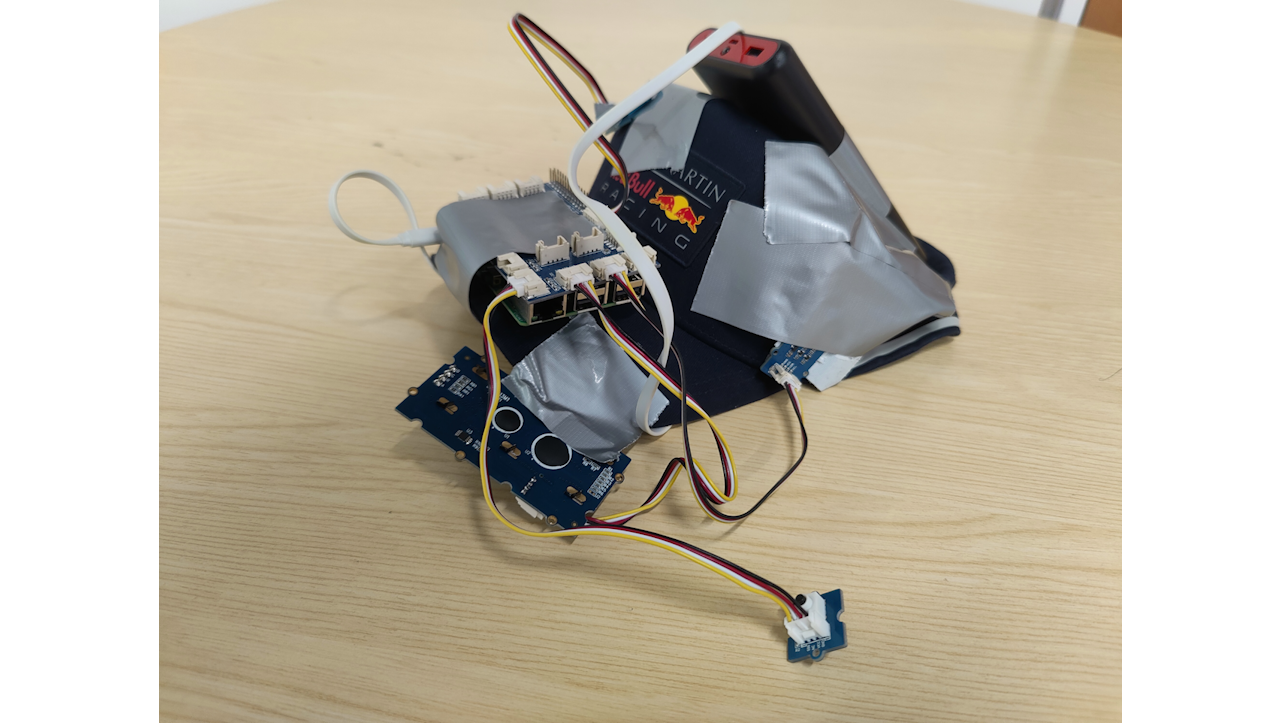

An Augmented Reality (AR) smartphone technology probe was selected as the method. The probe was designed iteratively using user persona and scenario techniques, and was implemented in Unity with the Vuforia AR package as its foundation.